Why Do Computers Use Binary? Unveiling the Digital Language

Computers use binary because it’s the simplest and most reliable way to represent information using electrical signals: on or off, 1 or 0. This reduces errors, simplifies circuit design, and enables efficient data storage and processing.

The Foundation of Digital Computation: Understanding Binary

The question of why computers use binary is fundamental to understanding how modern technology works. At its core, a computer is an electronic machine, and like any machine, it needs a reliable and straightforward method of operation. Binary code provides this framework, translating complex instructions and data into a form that electrical circuits can easily interpret. This section explains the rationale behind this choice, exploring the benefits, historical context, and practical implications of using binary in computer systems.

Simplicity and Reliability: The Cornerstones of Binary’s Dominance

The primary reason for choosing binary is its simplicity. Consider the alternative: using ten different voltage levels to represent the decimal digits 0 through 9. Within the same voltage range, adjacent levels would sit only about a tenth of the range apart, so a small fluctuation could push a signal into a neighboring level and cause it to be misread. Binary, however, only requires distinguishing between two states: on (represented as 1) and off (represented as 0).

This reliability is critical. Electronic components are not perfect; they are subject to noise, manufacturing variations, and changing environmental conditions such as temperature. A system that had to distinguish among many voltage levels would be highly sensitive to these imperfections and would misread signals often. With only two states, the gap between them can be made wide, so the difference between “on” and “off” remains easy to detect even when some noise is present.
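To make the noise argument concrete, here is a minimal Python simulation under deliberately simplified assumptions: signals span 0 to 1 V, the levels are evenly spaced, noise is Gaussian with an arbitrarily chosen standard deviation of 0.05 V, and the receiver decodes each voltage to the nearest level. The function name `error_rate` and all parameter values are illustrative only, not measurements of real hardware.

```python
import random


def error_rate(num_levels: int, noise_sigma: float, trials: int = 10_000, seed: int = 0) -> float:
    """Fraction of symbols misread when evenly spaced levels in 0..1 V pick up Gaussian noise.

    Simplified model (assumption): one fixed noise level, nearest-level decoding.
    """
    if num_levels < 2:
        raise ValueError("need at least two levels")
    rng = random.Random(seed)          # fixed seed so results are repeatable
    step = 1.0 / (num_levels - 1)      # voltage gap between adjacent levels
    errors = 0
    for _ in range(trials):
        sent = rng.randrange(num_levels)                     # symbol to transmit
        voltage = sent * step + rng.gauss(0.0, noise_sigma)  # level plus noise
        # Decode to the nearest level, clamped to the valid range.
        received = min(num_levels - 1, max(0, round(voltage / step)))
        if received != sent:
            errors += 1
    return errors / trials


binary = error_rate(2, noise_sigma=0.05)    # two levels, 1 V apart
decimal = error_rate(10, noise_sigma=0.05)  # ten levels, about 0.11 V apart
print(f"binary error rate:  {binary:.4f}")
print(f"decimal error rate: {decimal:.4f}")
```

With these settings the two-level scheme should misread essentially no symbols, since the noise would have to exceed half a volt, while the ten-level scheme misreads a substantial fraction because its decision margin is only about 0.056 V.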

Implementing Binary with Electronic Circuits

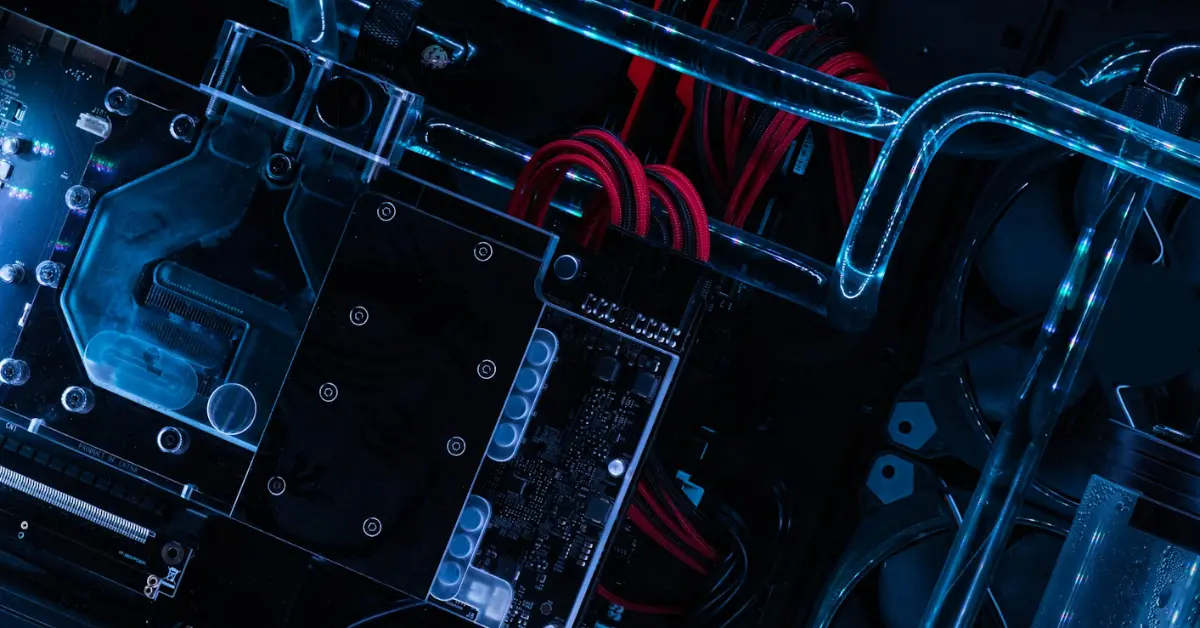

Implementing binary with electronic circuits is relatively straightforward. A switch can be either on (representing 1) or off (representing 0). Transistors, the fundamental building blocks of modern computers, function as electronic switches. By combining millions or even billions of transistors, incredibly complex circuits can be built to perform calculations and manipulate data, all based on these simple on/off states.

Logic gates, such as AND, OR, and NOT gates, are implemented using transistors and operate on binary inputs to produce binary outputs. These gates form the basis of all digital circuits, allowing computers to perform arithmetic operations, logical comparisons, and data manipulation.
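The Python sketch below models gates as functions on 0/1 values and composes an XOR gate purely from AND, OR, and NOT, illustrating how more complex behavior is built from the basic gates. The function names are hypothetical, and Python’s bitwise operators merely stand in for what real transistor circuits do.

```python
from itertools import product


# Each gate takes and returns bits (0 or 1).
def and_gate(a: int, b: int) -> int:
    return a & b


def or_gate(a: int, b: int) -> int:
    return a | b


def not_gate(a: int) -> int:
    return 1 - a


def xor_gate(a: int, b: int) -> int:
    # XOR composed only from the three basic gates: (a OR b) AND NOT (a AND b).
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


# Print a truth table covering every combination of two input bits.
print("a b | AND OR XOR")
for a, b in product((0, 1), repeat=2):
    print(f"{a} {b} |  {and_gate(a, b)}   {or_gate(a, b)}   {xor_gate(a, b)}")
```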

The Historical Context: From Vacuum Tubes to Transistors

The choice of binary wasn’t arbitrary. Early computers, built with vacuum tubes, relied on the principle of whether or not a tube was conducting electricity. This readily translated into a binary system. While technology has significantly advanced since then, the fundamental principle of representing information using two states has remained. Transistors, much smaller and more efficient than vacuum tubes, continued this tradition, solidifying binary as the core language of computing.

Benefits of Using Binary in Computers

Using binary offers several key benefits:

- Simplicity: Only two states (0 and 1) are needed.

- Reliability: Easy to distinguish between the two states, reducing errors.

- Ease of Implementation: Simple electronic circuits can represent binary data.

- Mathematical Basis: Binary arithmetic is well-defined and easy to implement.

- Logic Gate Compatibility: Binary logic is directly compatible with logic gates (AND, OR, NOT).

Common Misconceptions about Binary

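One common misconception is that computers “think” in binary. While computers do process information in binary form, programmers rarely work with it directly: they write in higher-level languages (like Python, Java, or C++) that are much easier for humans to understand. Programs in these languages are ultimately executed as machine code, either because a compiler translates them into it or because they run on an interpreter or virtual machine that is itself machine code, and that machine code is represented in binary.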

Another misconception is that binary is inherently difficult to understand. While working directly with binary numbers can be challenging, the underlying concept is relatively simple. With some practice, converting between binary and decimal can become straightforward.
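As a small illustration, the following Python sketch converts in both directions: decimal to binary by repeated division by 2, and binary to decimal by accumulating place values. The function names are illustrative; Python’s built-in `bin()` and `int(s, 2)` already perform these conversions and are used here only as a cross-check.

```python
def to_binary(n: int) -> str:
    """Convert a non-negative integer to a binary string by repeated division by 2."""
    if n < 0:
        raise ValueError("this sketch handles non-negative integers only")
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # each remainder is the next bit, least significant first
        n //= 2
    return "".join(reversed(digits))


def from_binary(bits: str) -> int:
    """Convert a binary string back to an integer."""
    value = 0
    for bit in bits:
        # Doubling shifts the running total one place left; then the new bit is added.
        value = value * 2 + int(bit)
    return value


print(to_binary(13))        # 1101
print(from_binary("1101"))  # 13

# Cross-check against Python's built-ins.
assert to_binary(13) == bin(13)[2:]
assert from_binary("1101") == int("1101", 2)
```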

Frequently Asked Questions (FAQs)

Why is binary called “base-2”?

Because it uses only two digits, 0 and 1. Decimal is called base-10 because it uses ten digits (0-9). Similarly, hexadecimal is base-16. Base-2 accurately reflects that each place value in a binary number represents a power of 2 (e.g., 2⁰, 2¹, 2², etc.).
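As a worked example of these place values, the binary number 1011 (chosen arbitrarily) expands as follows:

```latex
1011_2 = 1 \cdot 2^3 + 0 \cdot 2^2 + 1 \cdot 2^1 + 1 \cdot 2^0 = 8 + 0 + 2 + 1 = 11_{10}
```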

How do computers represent text using binary?

Computers use character encoding schemes such as ASCII or Unicode to represent text. Each character is assigned a unique numerical value, which is then represented in binary. For example, the letter “A” is assigned the number 65 in both ASCII and Unicode, which is 01000001 in binary.
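The following Python sketch shows both steps: `ord()` returns a character’s numeric code point, and encoding the string produces bytes that can be printed as 8-bit binary strings. The example assumes UTF-8, one common Unicode encoding, and the helper name `text_to_bits` is hypothetical.

```python
def text_to_bits(text: str, encoding: str = "utf-8") -> list[str]:
    """Encode text to bytes, then show each byte as an 8-bit binary string."""
    return [format(byte, "08b") for byte in text.encode(encoding)]


print(ord("A"))           # 65: the code point assigned to "A"
print(text_to_bits("A"))  # ['01000001']: one byte
print(text_to_bits("é"))  # ['11000011', '10101001']: two bytes in UTF-8
```

Characters outside the ASCII range, such as “é”, need more than one byte in UTF-8, which is why the second example produces two groups of bits.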

Can computers use other number systems besides binary?

It is possible in principle, but rarely practical. Some specialized or experimental systems have used ternary (base-3) or other number systems, yet binary remains the dominant choice because of its simplicity and reliability in electronic implementations. Circuits that must reliably tell apart three or more states need tighter voltage margins, which makes them significantly more costly and complex to build.

How is binary used in memory storage?

Computer memory and storage (RAM, ROM, hard drives, SSDs) hold data as binary information. In the simplest case, each memory cell stores a single bit, a 0 or a 1: DRAM uses a tiny capacitor and transistor per bit, SSDs trap electric charge in flash cells, and hard drives magnetize small regions of a spinning platter. Bits are grouped into bytes of 8 bits, and larger amounts of data are measured in kilobytes, megabytes, gigabytes, and terabytes.
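To illustrate how individual bits become bytes, this Python sketch packs a flat list of bits into bytes, eight at a time, most significant bit first. The `pack_bits` helper and the bit ordering are assumptions for the example; real memory hardware does this grouping in circuitry rather than software.

```python
def pack_bits(bits: list[int]) -> bytes:
    """Group a flat sequence of bits into bytes, most significant bit first (assumed order)."""
    if len(bits) % 8 != 0:
        raise ValueError("bit count must be a multiple of 8")
    out = bytearray()
    for start in range(0, len(bits), 8):
        byte = 0
        for bit in bits[start:start + 8]:
            byte = (byte << 1) | bit  # shift previous bits left, append the new one
        out.append(byte)
    return bytes(out)


bits = [0, 1, 0, 0, 1, 0, 0, 0,   # 72
        0, 1, 1, 0, 1, 0, 0, 1]   # 105
print(pack_bits(bits))  # b'Hi'
```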

What is a bit, and why is it important in binary?

A bit is the fundamental unit of information in computing, representing a single binary digit (0 or 1). The word “bit” is a contraction of “binary digit.” All data and instructions are ultimately broken down into bits for processing and storage within a computer system.

How is binary used in networking?

In networking, data is transmitted as streams of bits. Network protocols define how these bits are organized and interpreted. For instance, IP addresses, MAC addresses, and data packets are all ultimately represented and transmitted as binary data.
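For example, an IPv4 address is a 32-bit number conventionally written as four decimal bytes. Python’s standard `ipaddress` module converts it to an integer, which the hypothetical helper `ipv4_to_bits` below formats as four groups of 8 bits. The addresses are arbitrary private-range examples.

```python
import ipaddress


def ipv4_to_bits(address: str) -> str:
    """Return an IPv4 address as four dot-separated groups of 8 bits."""
    value = int(ipaddress.IPv4Address(address))  # the whole address as one 32-bit integer
    bits = format(value, "032b")
    return ".".join(bits[i:i + 8] for i in range(0, 32, 8))


print(ipv4_to_bits("192.168.1.1"))
# 11000000.10101000.00000001.00000001

# A /24 subnet mask is 24 one-bits followed by 8 zero-bits.
netmask = ipaddress.IPv4Network("192.168.1.0/24").netmask
print(ipv4_to_bits(str(netmask)))
# 11111111.11111111.11111111.00000000
```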

Is binary code the same as machine code?

They are closely related, but not exactly the same. Machine code is the lowest-level programming language, directly executable by the CPU. It consists of binary instructions that tell the CPU what to do. Binary code, in general, is simply the representation of data and instructions using 0s and 1s.

Why doesn’t a computer use decimal numbers directly?

Creating hardware that can reliably distinguish between ten different voltage levels is far more complex and error-prone than distinguishing between just two. The increased complexity translates into higher costs, lower reliability, and slower performance.

How are negative numbers represented in binary?

Several methods exist to represent negative numbers in binary, including sign-magnitude, one’s complement, and two’s complement. Two’s complement is the most common method because it simplifies arithmetic operations and has a single representation for zero.
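A minimal Python sketch of two’s complement, assuming a fixed 8-bit width by default: a negative number is stored as 2^width minus its magnitude, which is what masking with `2**width - 1` computes. The function names are hypothetical.

```python
def to_twos_complement(value: int, width: int = 8) -> str:
    """Return the `width`-bit two's complement bit pattern of `value`."""
    if not -(1 << (width - 1)) <= value < (1 << (width - 1)):
        raise ValueError(f"{value} does not fit in {width} signed bits")
    # Masking wraps negatives around: -n becomes 2**width - n.
    return format(value & ((1 << width) - 1), f"0{width}b")


def from_twos_complement(bits: str) -> int:
    """Interpret a bit string as a signed two's complement integer."""
    raw = int(bits, 2)
    # If the top (sign) bit is set, the pattern stands for raw - 2**width.
    if bits[0] == "1":
        raw -= 1 << len(bits)
    return raw


print(to_twos_complement(5))             # 00000101
print(to_twos_complement(-5))            # 11111011
print(from_twos_complement("11111011"))  # -5

# Adding the patterns for 5 and -5 and dropping the carry out of bit 7 gives zero.
print((0b00000101 + 0b11111011) & 0xFF)  # 0
```

The last line shows why this method simplifies arithmetic: an ordinary binary adder produces the correct signed result without any special handling of the sign.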

How do computers perform arithmetic operations using binary?

Computers use logic gates and binary arithmetic rules to perform addition, subtraction, multiplication, and division. Binary addition, for example, follows simple rules similar to decimal addition but with only two digits (0 and 1). These basic operations can be combined to perform more complex calculations.
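The sketch below, in Python, builds a full adder from the bitwise operations that correspond to XOR, AND, and OR gates, then chains copies of it into a ripple-carry adder, one common (though not the fastest) way to add binary numbers in hardware. The 8-bit width and the function names are assumptions for illustration.

```python
def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three bits; return (sum_bit, carry_out)."""
    sum_bit = a ^ b ^ carry_in                  # XOR gates
    carry_out = (a & b) | (carry_in & (a ^ b))  # AND and OR gates
    return sum_bit, carry_out


def ripple_add(x: int, y: int, width: int = 8) -> tuple[int, int]:
    """Add two unsigned integers bit by bit, passing each carry to the next position.

    Returns (result, final_carry). The result is truncated to `width` bits,
    as it would be in a fixed-width hardware register.
    """
    result, carry = 0, 0
    for i in range(width):
        a = (x >> i) & 1  # bit i of x
        b = (y >> i) & 1  # bit i of y
        sum_bit, carry = full_adder(a, b, carry)
        result |= sum_bit << i
    return result, carry


print(ripple_add(5, 3))      # (8, 0)
print(ripple_add(200, 100))  # (44, 1): 300 does not fit in 8 bits, so the carry is set
```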

What are the limitations of using binary?

While binary is ideal for hardware implementation, it can be cumbersome for humans to work with directly. Long binary strings can be difficult to read and interpret. This is why programmers use higher-level languages that are more abstract and easier to understand.

What’s the future of binary in computing? Will it ever be replaced?

While research into alternative computing paradigms such as quantum computing and neuromorphic computing is ongoing, binary is likely to remain the dominant system for the foreseeable future. Its simplicity, reliability, and well-established infrastructure make it difficult to replace entirely, at least in traditional computing applications. The advantages that led computers to adopt binary in the first place are simply too important to give up.