How Long Does AI Image Generation Take? The Evolving Timeline

The time it takes to generate an image using AI varies greatly, from a few seconds to several minutes, depending on factors like model complexity, hardware resources, and desired image quality. Typically, simpler images generate faster, while complex and detailed results require more processing time.

Understanding AI Image Generation

Artificial Intelligence (AI) image generation has evolved rapidly, transforming how we create visual content. Producing a polished image no longer requires sophisticated graphic design skills: anyone can enter a text prompt and receive a unique image in return. Understanding how this process works is key to appreciating the time involved and optimizing your experience.

The Core Elements Affecting Generation Time

Several key factors determine how long AI image generation takes. Understanding these variables helps you manage expectations and, where possible, speed up the process.

- Model Complexity: Large models, such as those behind Stable Diffusion or Midjourney, require substantial computation for every image; the more parameters a model has, the more work each generation step involves.

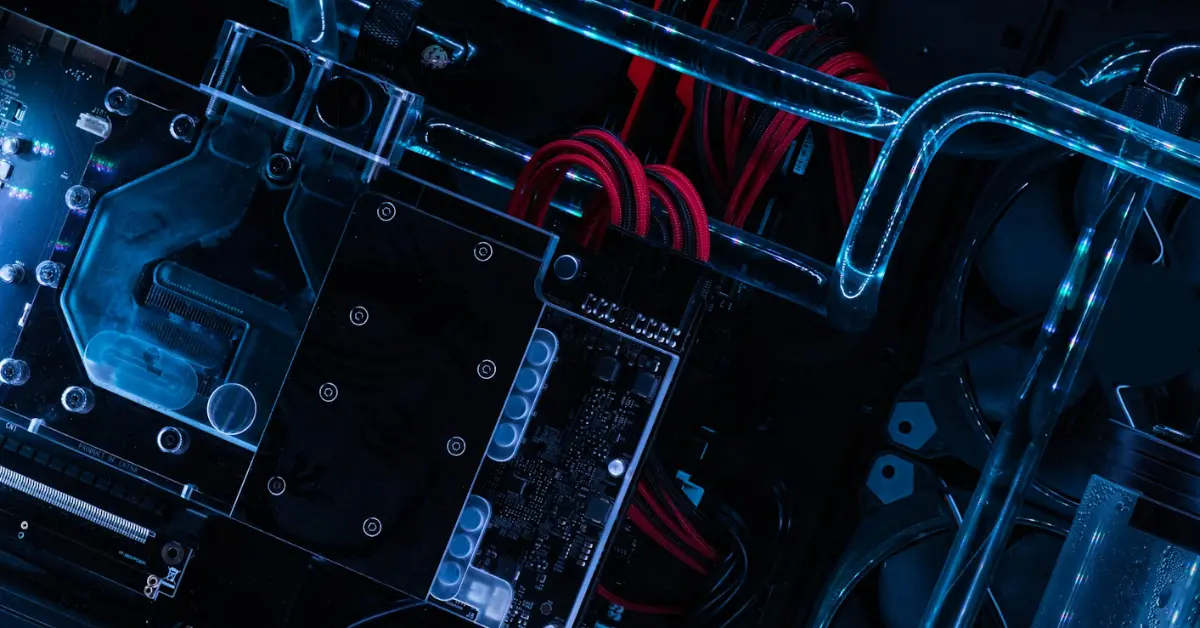

- Hardware Resources: A powerful GPU (Graphics Processing Unit) significantly reduces processing time compared to a standard CPU.

- Image Resolution: Generating high-resolution images demands more processing power and, therefore, takes longer.

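- Prompt Complexity: In diffusion models such as Stable Diffusion, the prompt is converted into a fixed-size numerical representation, so a longer prompt adds little direct computation. Intricate prompts with multiple subjects, styles, and modifiers mainly add time indirectly, because they often take more attempts to get right.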

- Server Load: For cloud-based services, demand on the provider's servers affects performance; peak hours often bring longer wait times.

The Typical AI Image Generation Process

While the specifics vary depending on the platform, the AI image generation process generally follows these steps:

- Prompt Input: The user provides a textual description of the desired image.

- Prompt Processing: The AI analyzes the prompt and translates it into a numerical representation.

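- Image Generation: The model uses its learned parameters, guided by that numerical representation, to create the image. In diffusion models such as Stable Diffusion, this means starting from random noise and removing it step by step. This is the most computationally intensive stage.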

- Refinement (Optional): Some platforms offer options to refine the generated image, further increasing generation time.

- Output: The final image is presented to the user.
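The steps above can be sketched as a toy program. This is a minimal illustration under stated assumptions, not a real model: `encode_prompt` and `denoise_step` are hypothetical stand-ins for a text encoder and an image model. It shows why the Image Generation stage dominates: it calls the model once per step, and in this sketch the prompt is reduced to a fixed-size vector, so every loop iteration does the same amount of work whatever the prompt says.

```python
import random


def encode_prompt(prompt: str, dim: int = 8) -> list[float]:
    """Hypothetical text encoder: maps any prompt to a fixed-size vector."""
    rng = random.Random(prompt)  # deterministic per prompt
    return [rng.uniform(-1.0, 1.0) for _ in range(dim)]


def denoise_step(image: list[float], embedding: list[float], strength: float) -> list[float]:
    """Hypothetical model call: nudges every value toward the prompt embedding."""
    return [x + strength * (e - x) for x, e in zip(image, embedding)]


def generate(prompt: str, steps: int = 20, seed: int = 0) -> tuple[list[float], int]:
    embedding = encode_prompt(prompt)                 # Prompt Processing
    rng = random.Random(seed)
    image = [rng.gauss(0.0, 1.0) for _ in embedding]  # start from random noise
    model_calls = 0
    for _ in range(steps):                            # Image Generation: the loop that dominates runtime in real models
        image = denoise_step(image, embedding, strength=0.2)
        model_calls += 1
    return image, model_calls                         # Output


image, calls = generate("a red fox in the snow", steps=25)
print(calls)  # → 25
```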

The Impact of Subscription Tiers and Free Trials

Many AI image generation platforms operate on a subscription basis, with different tiers offering varying levels of computational resources and faster processing times. Free trials, while valuable for testing the software, often come with restrictions on generation speed and image resolution. These limitations affect both how long generation takes and your overall experience.

Common Mistakes That Increase Generation Time

Users sometimes inadvertently increase image generation time through inefficient practices. Awareness of these pitfalls can help streamline the process.

- Overly Complex Prompts: While detail is useful, excessively long or convoluted prompts can confuse the AI, producing off-target images that have to be regenerated.

- Unrealistic Expectations: Demanding extremely high resolution or unusual styles might push the AI beyond its capabilities, resulting in longer processing times or failed attempts.

- Ignoring Server Load: Generating images during peak hours can significantly increase wait times.

- Insufficient Hardware: Relying on underpowered devices will inevitably lead to longer generation times, particularly for complex images.

Benchmarking AI Image Generation Speeds

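A precise answer to “How long does AI image generation take?” is difficult because it fluctuates constantly. The Stable Diffusion figures below assume a mid-range gaming PC with a dedicated GPU; DALL-E 2 and Midjourney run only on their providers' servers, so their times depend on the service and its current load rather than on your own hardware. With that in mind, a typical benchmark might look like this: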

| AI Model | Image Resolution | Prompt Complexity | Generation Time (Approximate) |
|---|---|---|---|
| DALL-E 2 | 512×512 | Simple | 5-10 seconds |
| DALL-E 2 | 1024×1024 | Complex | 20-30 seconds |
| Stable Diffusion | 512×512 | Simple | 2-5 seconds |
| Stable Diffusion | 1024×1024 | Complex | 10-20 seconds |
| Midjourney | Variable | Simple | 15-30 seconds |
| Midjourney | Variable | Complex | 30-60 seconds |
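Figures like these are easiest to trust when you measure them on your own setup. The helper below is a minimal sketch, assuming you can wrap one generation call in a zero-argument function; `benchmark` and `fake_generate` are hypothetical names, and `fake_generate` merely sleeps in place of a real model. It discards warm-up runs, which often include one-time costs such as loading the model, and reports the median of the remaining runs so that a single slow run does not skew the result.

```python
import statistics
import time
from typing import Callable


def benchmark(generate: Callable[[], object], warmup: int = 1, repeats: int = 5) -> dict:
    """Time a zero-argument generation function.

    Warm-up runs are discarded because first calls often include one-time
    costs (for example, loading model weights into memory).
    """
    for _ in range(warmup):
        generate()
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        generate()
        timings.append(time.perf_counter() - start)
    return {
        "median_s": statistics.median(timings),
        "min_s": min(timings),
        "max_s": max(timings),
        "runs": len(timings),
    }


if __name__ == "__main__":
    def fake_generate() -> None:
        time.sleep(0.05)  # hypothetical stand-in for a real generation call

    print(benchmark(fake_generate, warmup=1, repeats=3))
```

Reporting the median alongside the minimum and maximum makes run-to-run variation visible instead of hiding it in a single number.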

Optimizing Your Workflow for Faster Results

Several strategies can help reduce image generation time.

- Use a Powerful GPU: If you run models locally, a dedicated GPU with ample VRAM is the most effective way to accelerate the process.

- Optimize Prompts: Keep prompts concise and clear, focusing on the most important details, so you reach a usable image in fewer attempts. Experiment with different phrasing to find what works best.

- Generate in Off-Peak Hours: Avoid generating images during times of high server load.

- Consider Lower Resolution: Generating images at a lower resolution can significantly reduce processing time, especially during iterative design. You can upscale later if needed.

- Experiment with Different AI Models: Each model has its strengths and weaknesses. Some models are faster at generating certain types of images.

Staying Updated on the Latest Advances

The field of AI image generation is rapidly evolving. New models and techniques are constantly being developed, leading to faster generation times and improved image quality. Staying informed about these advancements will help you leverage the latest tools and optimize your workflow.

FAQs: Decoding AI Image Generation Time

What is the fastest AI image generator available?

The speed of AI image generators keeps changing as the technology advances. Currently, Stable Diffusion tends to be one of the faster options, especially when running locally on a computer with a powerful GPU. Cloud-based services like DALL-E 2 and Midjourney also return results fairly quickly, typically within a minute and often faster for simpler prompts.

Does the size of the generated image impact the processing time?

Yes, the size of the generated image is a significant factor affecting processing time. Larger images require more computational power to generate and, therefore, take longer. Generating a 1024×1024 image, for instance, will typically take considerably longer than a 512×512 image using the same AI model and hardware.
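The effect of resolution can be worked out roughly. The sketch below assumes a Stable-Diffusion-1.x-style model that operates on a latent grid 8 times smaller than the image on each side; `scaling_vs_512` is an illustrative name, not a library function. Doubling both sides quadruples the number of pixels and latent positions, and self-attention layers, which compare every position with every other position, grow with the square of that count.

```python
def scaling_vs_512(width: int, height: int, downsample: int = 8) -> dict:
    """Relative per-step cost factors compared with a 512x512 image.

    Assumes the latent grid is the image size divided by `downsample`.
    """
    base_positions = (512 // downsample) * (512 // downsample)
    positions = (width // downsample) * (height // downsample)
    ratio = positions / base_positions
    return {
        "pixels": (width * height) / (512 * 512),
        "latent_positions": ratio,
        # Self-attention compares every latent position with every other one,
        # so its cost grows with the square of the position count.
        "self_attention": ratio ** 2,
    }


print(scaling_vs_512(1024, 1024))
# → {'pixels': 4.0, 'latent_positions': 4.0, 'self_attention': 16.0}
```

Because only some layers use attention, the real per-step slowdown at 1024×1024 (for the same number of steps) typically falls somewhere between these two factors.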

How much does a good GPU improve AI image generation speed?

A good GPU can drastically improve AI image generation speed, often by a factor of ten or more. Dedicated GPUs, especially those with plenty of VRAM, are designed for parallel processing, which makes them well suited to the calculations involved in AI image generation. Generating on a CPU alone is usually far slower and can be impractical, especially at higher resolutions or step counts.

Can I use AI image generators on my phone?

Yes, many AI image generators offer mobile apps or web-based interfaces accessible on smartphones. Most of these send your prompt to cloud servers, so generation speed depends on the service rather than on the phone itself. Running a model directly on a phone, where supported, is generally much slower than on a computer with a dedicated GPU because of the phone's limited processing power.

Does the number of steps in Stable Diffusion affect the generation time?

Yes, the number of steps in Stable Diffusion directly affects generation time. Each step is one pass of the model that removes a little more noise from the image, so time grows roughly in proportion to the step count. More steps can improve quality, though the gains diminish beyond a certain point. Users can adjust the number of steps to balance speed and image quality.
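Since each step is a separate pass of the model, a linear estimate is a reasonable first approximation. This is a sketch with hypothetical inputs: `estimate_seconds` is an illustrative name, and `seconds_per_step` and `overhead_s` (fixed work such as encoding the prompt and producing the final image) are values you would measure yourself, not published figures.

```python
def estimate_seconds(steps: int, seconds_per_step: float, overhead_s: float = 0.0) -> float:
    """Estimate generation time as a fixed overhead plus a constant cost per step.

    `seconds_per_step` and `overhead_s` are hypothetical inputs; measure them
    on your own hardware rather than treating any value here as typical.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return overhead_s + steps * seconds_per_step


# Made-up numbers: 0.1 s per step plus 0.5 s of fixed overhead.
for steps in (20, 30, 50):
    print(steps, round(estimate_seconds(steps, 0.1, 0.5), 2))
```

Under this model, going from 50 to 25 steps halves the per-step portion of the time while the fixed overhead stays the same.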

Are there any free AI image generators that are reasonably fast?

Several free AI image generators are available, but their speed and capabilities can vary greatly. Some free services use shared resources, which can lead to longer wait times. However, models like Stable Diffusion can be run locally on a personal computer, giving you potentially faster results if you have adequate hardware, though it requires more technical setup.

How does server load affect AI image generation time?

High server load significantly increases image generation time. When many users request images at the same time, the provider's servers become congested and requests wait in a queue, leading to longer wait times for everyone. Generating images during off-peak hours can help avoid this slowdown.
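A deliberately simplified queue model shows why congestion matters. It assumes, hypothetically, that requests are served `workers` at a time in lockstep rounds and that each takes `seconds_per_job`; `estimated_total_s` is an illustrative name, and real services schedule work in ways users cannot see.

```python
def estimated_total_s(jobs_ahead: int, seconds_per_job: float, workers: int) -> float:
    """Time until your image is ready under a simplified queue model.

    Hypothetical assumptions: requests are served `workers` at a time in
    lockstep rounds and every request takes `seconds_per_job`.
    """
    if workers < 1:
        raise ValueError("workers must be at least 1")
    rounds_before_yours = jobs_ahead // workers  # full rounds you must wait through
    return (rounds_before_yours + 1) * seconds_per_job


print(estimated_total_s(jobs_ahead=40, seconds_per_job=10.0, workers=8))  # busy: 60.0
print(estimated_total_s(jobs_ahead=4, seconds_per_job=10.0, workers=8))   # quiet: 10.0
```

In the quiet case a worker is free immediately, so the total is just the generation time itself; in the busy case most of the total is spent waiting.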

Is it faster to generate multiple images at once or one image at a time?

The answer depends on the AI model and platform. Some platforms allow for batch processing, where multiple images are generated simultaneously. This is usually faster per image than generating them one at a time because it uses the hardware more efficiently. However, the batch as a whole still takes longer than a single image would.
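The trade-off can be illustrated with a simple cost model. It is a hypothetical sketch (`batch_times` is an illustrative name) that assumes each run has a fixed overhead plus a constant cost per image; real batching gains also come from the GPU processing several images in parallel, which this model ignores.

```python
def batch_times(batch_size: int, overhead_s: float, per_image_s: float) -> tuple[float, float]:
    """Return (total seconds, seconds per image) for one batched run.

    Hypothetical model: a fixed overhead per run plus a constant cost per image.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    total = overhead_s + batch_size * per_image_s
    return total, total / batch_size


single_total, _ = batch_times(1, overhead_s=2.0, per_image_s=3.0)
batch_total, batch_per_image = batch_times(4, overhead_s=2.0, per_image_s=3.0)
print(single_total, 4 * single_total, batch_total, batch_per_image)
# → 5.0 20.0 14.0 3.5
```

With these made-up numbers, four separate runs take 20 seconds while one batch of four takes 14: faster per image (3.5 seconds) but longer in total than a single 5-second image.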

How can I optimize my prompts for faster generation?

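Optimizing prompts involves keeping them concise, clear, and specific. Avoid overly complex or ambiguous language, and experiment with different phrasing to see what works best with the AI model you are using. For diffusion models such as Stable Diffusion, prompt length has little effect on the computation per image, so the main time saving comes from needing fewer attempts: focusing on key details and omitting unnecessary adjectives makes it more likely that the first results are usable.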

What is latent diffusion, and how does it impact image generation time?

Latent diffusion is a technique used in AI image generation that performs diffusion processes in a lower-dimensional latent space, rather than directly in the pixel space. This significantly reduces the computational burden, leading to faster generation times and lower memory requirements. Models like Stable Diffusion utilize latent diffusion for efficient image creation.
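A quick calculation shows the size of the saving. Stable Diffusion's autoencoder maps a 512×512 RGB image to a 64×64 latent with 4 channels (8 times smaller on each side); the snippet below simply counts the values in each representation, using an illustrative helper named `value_count`.

```python
def value_count(height: int, width: int, channels: int) -> int:
    """Number of values stored for a height x width grid with the given channels."""
    return height * width * channels


pixel_values = value_count(512, 512, 3)   # RGB image in pixel space
latent_values = value_count(64, 64, 4)    # Stable Diffusion latent: 8x smaller per side, 4 channels
print(pixel_values, latent_values, pixel_values // latent_values)
# → 786432 16384 48
```

The denoising network therefore works on 48 times fewer values at every step; the autoencoder's decoder converts the final latent back into pixels only once, at the end.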

How does image upscaling impact the overall time taken?

If you generate an image at a lower resolution and then upscale it, the upscaling step adds time that depends on the algorithm used. A simple algorithm upscales quickly but may reduce image quality, while more complex algorithms take longer and usually produce better results.
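Nearest-neighbour interpolation is about the simplest upscaling algorithm: each output pixel copies the closest input pixel, so the work is a single lookup per output pixel, which is why it is fast but looks blocky. The sketch below is a minimal pure-Python illustration on a grayscale image stored as a list of rows; `upscale_nearest` is an illustrative name, not a library function.

```python
def upscale_nearest(image: list[list[int]], factor: int) -> list[list[int]]:
    """Nearest-neighbour upscaling by an integer factor.

    Each output pixel copies the input pixel it falls on: one lookup per
    output pixel, which makes this cheap but produces blocky results.
    """
    if factor < 1:
        raise ValueError("factor must be at least 1")
    return [
        [row[x // factor] for x in range(len(row) * factor)]  # stretch horizontally
        for row in image
        for _ in range(factor)                                # repeat each row vertically
    ]


small = [[0, 255],
         [255, 0]]
print(upscale_nearest(small, 2))
# → [[0, 0, 255, 255], [0, 0, 255, 255], [255, 255, 0, 0], [255, 255, 0, 0]]
```

Higher-quality upscalers replace the single lookup with a weighted blend of several neighbours, or with a neural network, which is where the extra time goes.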

Why does it sometimes take longer to generate an image even with the same prompt?

Variations in server load and queue position, background processes on your machine, and one-time costs such as loading the model into memory on the first run can all make generation time fluctuate, even with the same prompt. The image itself is sampled from random noise, so results differ between runs, but with a fixed number of steps the amount of computation stays essentially the same. Some variation in timing is therefore normal, and generation time is not always consistent.