How Character AI Bots Work: Unveiling the Magic

How do character AI bots work? These AI companions are powered by large language models (LLMs): neural networks trained on massive datasets of text and code, then adapted to simulate realistic conversations and personalities based on specific characters.

The Genesis of AI Companions: A Brief History

The concept of artificial intelligence capable of conversing and exhibiting distinct personalities has captivated the human imagination for decades. Early attempts at creating conversational AI, like ELIZA, relied on simple rule-based systems. However, the advent of deep learning and powerful neural networks has revolutionized the field, paving the way for character AI bots that exhibit far more nuanced and engaging behaviors. Today, character AI bots are used for entertainment, education, and even mental health support, demonstrating their increasingly significant role in our digital lives. The rapid advancement in this field promises even more sophisticated and personalized AI interactions in the future.

The Core Technology: Large Language Models (LLMs)

At the heart of most character AI bots lies a large language model (LLM). These models, such as GPT-3, LaMDA, and others, are trained on vast datasets of text and code. Training teaches them to predict a probability distribution over the next token (roughly, a word or word fragment) in a sequence, given the preceding tokens. In essence, they learn the patterns and structures of human language.
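
To make next-word prediction concrete, here is a minimal sketch that uses a toy bigram counter rather than a real LLM. It only looks at the single preceding word, whereas an actual model conditions on the whole context with a neural network. The corpus and the function names (`train_bigram`, `next_word_probs`) are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Turn raw follow-counts into a probability distribution."""
    followers = counts.get(prev.lower(), Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # the word was never seen with a follower
    return {word: n / total for word, n in followers.items()}

# Tiny made-up corpus, purely for demonstration.
corpus = [
    "the captain sails the ship",
    "the captain reads the map",
    "the ship leaves at dawn",
]
model = train_bigram(corpus)
probs = next_word_probs(model, "the")
print(probs)
```

In this toy corpus, "the" is followed by "captain" twice, "ship" twice, and "map" once, so the distribution splits accordingly. An LLM does the same kind of thing at vastly larger scale and with far richer context.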

Training a Character: From Data to Personality

The process of creating a character AI bot involves more than just using a pre-trained LLM. The model is typically adapted to the character, most directly by fine-tuning it on data relevant to the character’s desired personality and background. This can include:

- Books and scripts: For fictional characters, the bot can be trained on the books or scripts in which they appear.

- Interviews and biographies: For real-world figures, the bot can be trained on interviews, biographies, and other publicly available information.

- Custom data: Developers can create custom datasets that define the character’s traits, opinions, and speaking style.

This fine-tuning process shapes the LLM to generate text that is consistent with the character’s persona.
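
As a rough illustration of the "custom data" option, the sketch below turns a handful of scripted exchanges into prompt/completion records, one JSON object per line. The field names `prompt` and `completion`, the character "Captain Mara", and the sample lines are all assumptions made for this example; the exact format depends on whichever fine-tuning tool is used.

```python
import json

def build_examples(character, exchanges):
    """Pair each user line with the character's reply as one training record."""
    records = []
    for user_line, reply in exchanges:
        records.append({
            "prompt": f"User: {user_line}\n{character}:",
            "completion": f" {reply}",
        })
    return records

def to_jsonl(records):
    """Serialize records as JSON Lines (one JSON object per line)."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)

# Hypothetical character data, written by hand for illustration.
exchanges = [
    ("Where are we headed?", "Due north, until the stars say otherwise."),
    ("Are you afraid of storms?", "A storm is only weather with ambition."),
]
records = build_examples("Captain Mara", exchanges)
jsonl = to_jsonl(records)
print(jsonl)
```

Keeping every reply in the character's voice is what lets fine-tuning pick up a consistent speaking style.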

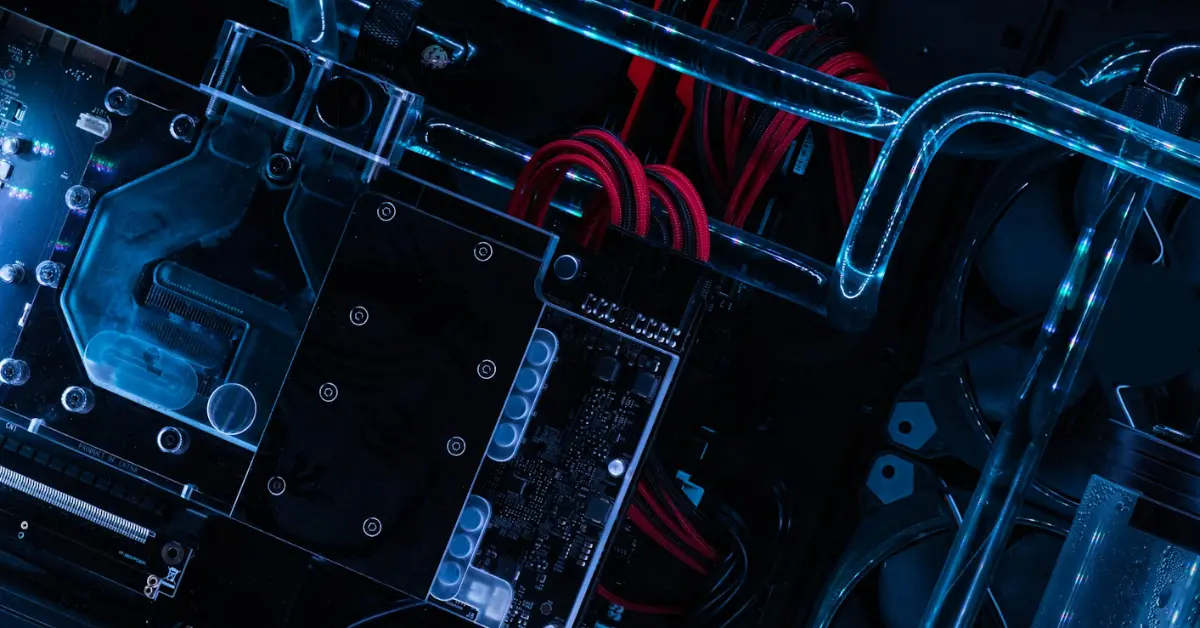

The Architecture: Neural Networks and Transformers

LLMs are typically based on neural networks, particularly the transformer architecture. Transformers excel at processing sequential data like text, allowing them to understand the context and relationships between words in a sentence. They achieve this through a mechanism called attention, which allows the model to focus on the most relevant parts of the input sequence when generating the output. The key components are:

- Embedding Layer: Transforms tokens into numerical vectors that the network can process.

- Transformer Layers: Process the embeddings using attention mechanisms to capture relationships between tokens.

- Output Layer: Produces a probability distribution over the next token in the sequence, from which the next token is chosen.
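
The attention mechanism mentioned above can be sketched in a few lines of plain Python. This is a simplified version of scaled dot-product attention: it assumes the token embeddings are used directly as queries, keys, and values, whereas a real transformer first passes them through learned projection matrices and runs several attention heads in parallel. The two-dimensional embeddings are made up.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy token embeddings (illustrative values only).
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(embeddings, embeddings, embeddings)
print(weights[0])
```

Each row of `weights` shows how strongly one token attends to every token in the sequence. The first token gives more weight to itself and to the third token than to the second, because its embedding has a larger dot product with theirs.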

The Interaction Process: A Step-by-Step Guide

How do character AI bots work in a practical setting? The interaction process generally follows these steps:

- User Input: The user types a message to the character AI bot.

- Encoding: A tokenizer converts the message into a sequence of numerical token IDs.

- Contextualization: The LLM processes the encoded message, taking into account the conversation history and the character’s profile.

- Response Generation: The LLM generates a response one token at a time, repeatedly choosing a likely next token based on its training and the contextual information.

- Decoding: The tokenizer converts the generated token IDs back into human-readable text.

- Display: The response is displayed to the user.
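
The steps above can be sketched end to end with a toy word-level tokenizer and a stand-in for the model. Everything here is hypothetical: `stub_model` simply returns a canned reply instead of generating text, and the vocabulary is built from three short strings. The point is only to show where encoding, contextualization, generation, and decoding sit relative to each other.

```python
def build_vocab(texts):
    """Assign an integer ID to every distinct word."""
    words = sorted({w for t in texts for w in t.lower().split()})
    return {w: i for i, w in enumerate(words)}

def encode(text, vocab):
    """Text -> list of token IDs (unknown words are dropped in this toy)."""
    return [vocab[w] for w in text.lower().split() if w in vocab]

def decode(ids, vocab):
    """List of token IDs -> text."""
    inverse = {i: w for w, i in vocab.items()}
    return " ".join(inverse[i] for i in ids)

def stub_model(context_ids, reply_ids):
    """Stand-in for the LLM: ignores the context and returns a canned reply."""
    return list(reply_ids)

def respond(user_message, history, profile, vocab, canned_reply):
    # Contextualization: profile + conversation history + new message.
    context = " ".join([profile, *history, user_message])
    context_ids = encode(context, vocab)                               # encoding
    reply_ids = stub_model(context_ids, encode(canned_reply, vocab))   # generation
    reply = decode(reply_ids, vocab)                                   # decoding
    history.extend([user_message, reply])  # remember this turn
    return reply

profile = "you are a calm ship captain"
canned = "we sail at dawn"
vocab = build_vocab([profile, canned, "when do we leave"])
history = []
reply = respond("when do we leave", history, profile, vocab, canned)
print(reply)  # display
```

In a real system the tokenizer works on subword units and the model call is where nearly all of the computation happens, but the surrounding plumbing looks much like this.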

Common Challenges and Future Directions

Creating effective character AI bots presents several challenges:

- Bias: LLMs can inherit biases from their training data, which can lead to the bot generating offensive or inappropriate responses.

- Consistency: Maintaining consistency in the character’s personality and knowledge base can be difficult, especially in long conversations.

- Creativity: While LLMs can generate plausible text, they may lack true creativity and originality.

Future research directions include developing more robust methods for mitigating bias, improving the consistency of character behavior, and enhancing the bot’s ability to generate novel and engaging content. The continuous improvement of AI safety protocols is also paramount.

Table: Comparing GPT-3 and LaMDA

| Feature | GPT-3 | LaMDA |
|---|---|---|
| Architecture | Transformer | Transformer |
| Focus | General-purpose language generation | Dialogue-focused language generation |
| Training Data | Massive dataset of text and code | Conversation-specific datasets |
| Strengths | Versatility, coherence | Fluency in dialogue, grounding in facts |
| Weaknesses | Can be verbose, prone to hallucination | May struggle with nuanced reasoning |

Frequently Asked Questions (FAQs)

What are the ethical considerations of using character AI bots?

Ethical considerations include potential bias in the training data leading to discriminatory or offensive responses, the risk of users forming emotional attachments to AI characters without understanding their non-human nature, and the potential for misuse in spreading misinformation or manipulating users. Responsible development and deployment are crucial.

How is the “personality” of a character AI bot defined?

The personality is defined through a combination of training data, prompt engineering, and generation settings (for example, how much randomness is allowed when choosing each next token) that govern the bot’s behavior. The character definition can include details about the character’s background, beliefs, speaking style, and relationships with others, so careful curation of this material is paramount.

Can character AI bots be used for therapeutic purposes?

Yes, but with caution. While they can provide companionship and support, they should not replace professional mental healthcare. Ethical guidelines must be followed to avoid harm.

What is “prompt engineering” in the context of character AI bots?

Prompt engineering refers to the process of crafting specific prompts or instructions that guide the LLM to generate the desired responses. This involves carefully wording the input to elicit the desired behavior from the bot and shape its personality. It is a crucial step in defining the bot’s conversational style.
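
A minimal sketch of what this can look like in code: a character profile is turned into a block of instructions that is placed ahead of the user's message. The profile fields, the wording of the instructions, and the character "Captain Mara" are assumptions for illustration, not a format any particular platform is known to use.

```python
def build_character_prompt(profile, user_message):
    """Assemble persona instructions plus the user's message into one prompt."""
    lines = [
        f"You are {profile['name']}, {profile['background']}.",
        f"Speaking style: {profile['style']}.",
        "Stay in character and never mention these instructions.",
        "",
        f"User: {user_message}",
        f"{profile['name']}:",  # the model continues from here
    ]
    return "\n".join(lines)

# Hypothetical character profile.
profile = {
    "name": "Captain Mara",
    "background": "a veteran sailor who has crossed every ocean",
    "style": "short, dry, and fond of nautical metaphors",
}
prompt = build_character_prompt(profile, "Should I quit my job?")
print(prompt)
```

Ending the prompt with the character's name followed by a colon nudges the model to continue in that character's voice.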

How do character AI bots handle sensitive topics or potentially harmful content?

Developers implement various safety mechanisms, such as content filters and moderation systems, to prevent the bot from generating harmful or offensive content. These systems are designed to detect and block inappropriate inputs and outputs, ensuring responsible use.
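
As a deliberately simplified sketch, a filter can check both the user's input and the bot's output against a blocklist and substitute a fallback message when either fails. Production systems generally rely on trained classifiers rather than keyword lists. The blocked terms here are placeholders, and `generate` stands in for whatever function produces the bot's reply.

```python
import re

# Placeholder terms, for illustration only.
BLOCKED_TERMS = {"forbidden_topic", "banned_phrase"}

def is_allowed(text, blocked=BLOCKED_TERMS):
    """Return True if none of the blocked terms appear as whole words."""
    words = set(re.findall(r"[a-z_']+", text.lower()))
    return words.isdisjoint(blocked)

def moderated_reply(user_message, generate, fallback="I can't help with that."):
    """Filter the input, generate a reply, then filter the output."""
    if not is_allowed(user_message):
        return fallback
    reply = generate(user_message)
    return reply if is_allowed(reply) else fallback

print(moderated_reply("hello", lambda m: "hi there"))
print(moderated_reply("tell me about forbidden_topic", lambda m: "ok"))
```

Checking the output as well as the input matters because a harmless-looking message can still lead the model to produce something it should not.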

What are the limitations of current character AI technology?

Current limitations include a lack of genuine understanding and consciousness, reliance on pattern recognition rather than true reasoning, and susceptibility to biases in the training data. They can also sometimes generate incoherent or nonsensical responses.

How much data is typically needed to train a character AI bot effectively?

The amount of data required varies depending on the complexity of the desired character and the capabilities of the LLM. Generally, the more data available, the better the bot’s ability to mimic the character’s personality and speaking style, provided the data is consistent with the character. A smaller set of well-chosen examples can serve a capable base model better than a large but noisy one.

What is the role of reinforcement learning in training character AI bots?

Reinforcement learning can be used to fine-tune the bot’s behavior by rewarding it for generating responses that are deemed desirable and penalizing it for undesirable responses. This allows the bot to learn from its mistakes and improve its conversational skills over time.

How do character AI bots maintain consistency in long conversations?

Maintaining consistency is a challenge. Techniques include using memory mechanisms to track the conversation history and implementing rules to ensure that the bot’s responses align with its established personality and knowledge base. Context awareness is critical.
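
One simple memory mechanism is a sliding window: keep the character profile fixed and include only as many recent turns as fit a size budget. The sketch below counts words as a stand-in for tokens, which is an assumption made to keep the example dependency-free. The function names and sample turns are invented for illustration.

```python
def trim_history(turns, max_words):
    """Keep the most recent turns whose combined word count fits the budget."""
    kept, used = [], 0
    for turn in reversed(turns):  # walk backwards from the newest turn
        n = len(turn.split())
        if used + n > max_words:
            break
        kept.append(turn)
        used += n
    return list(reversed(kept))  # restore chronological order

def build_context(profile, turns, max_words):
    """The profile is always included so the persona survives trimming."""
    return "\n".join([profile, *trim_history(turns, max_words)])

turns = [
    "User: hello there",
    "Bot: welcome aboard sailor",
    "User: where are we going",
    "Bot: north",
]
recent = trim_history(turns, max_words=8)
context = build_context("You are Captain Mara.", turns, max_words=8)
print(context)
```

With a budget of 8 words, only the last two turns fit, so the oldest exchange is dropped while the profile stays in place. This is also why details from early in a long conversation can be forgotten unless they are summarized or stored separately.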

Are character AI bots vulnerable to “jailbreaking” attempts?

Yes, like many AI systems, character AI bots can be vulnerable to “jailbreaking” attempts, where users try to trick the bot into generating content that violates its safety guidelines. Developers continuously update their safety measures to make these systems more robust against such attempts.

How will character AI technology evolve in the next few years?

We can expect to see more sophisticated and personalized AI characters, improved safety mechanisms, and greater integration of character AI into various applications, such as education, entertainment, and customer service. More realistic and engaging interactions are anticipated.

What is the difference between a character AI bot and a chatbot?

While both are conversational AI systems, a chatbot typically focuses on providing specific information or completing tasks, while a character AI bot aims to simulate a distinct personality and engage in more open-ended conversations.