How Are Self-Driving Cars Dangerous? Exploring the Risks of Autonomous Vehicles

Despite their promise of safer roads, self-driving cars pose risks from software glitches, sensor limitations, and ethical dilemmas. These can lead to accidents, system failures, and unpredictable behavior in complex scenarios.

Introduction: The Promise and Peril of Autonomous Driving

The automotive industry is undergoing a major transformation, driven by the pursuit of autonomous driving. Self-driving cars, once confined to science fiction, are rapidly becoming a reality, promising fewer accidents, greater mobility for elderly and disabled people, and more efficient transportation systems. This technological leap, however, brings its own challenges and inherent dangers, and understanding them is crucial for deploying self-driving technology safely and responsibly. This article examines the ways in which self-driving cars can be dangerous, covering both the technical and ethical dimensions of the technology.

The Core Technology: Sensors and Software

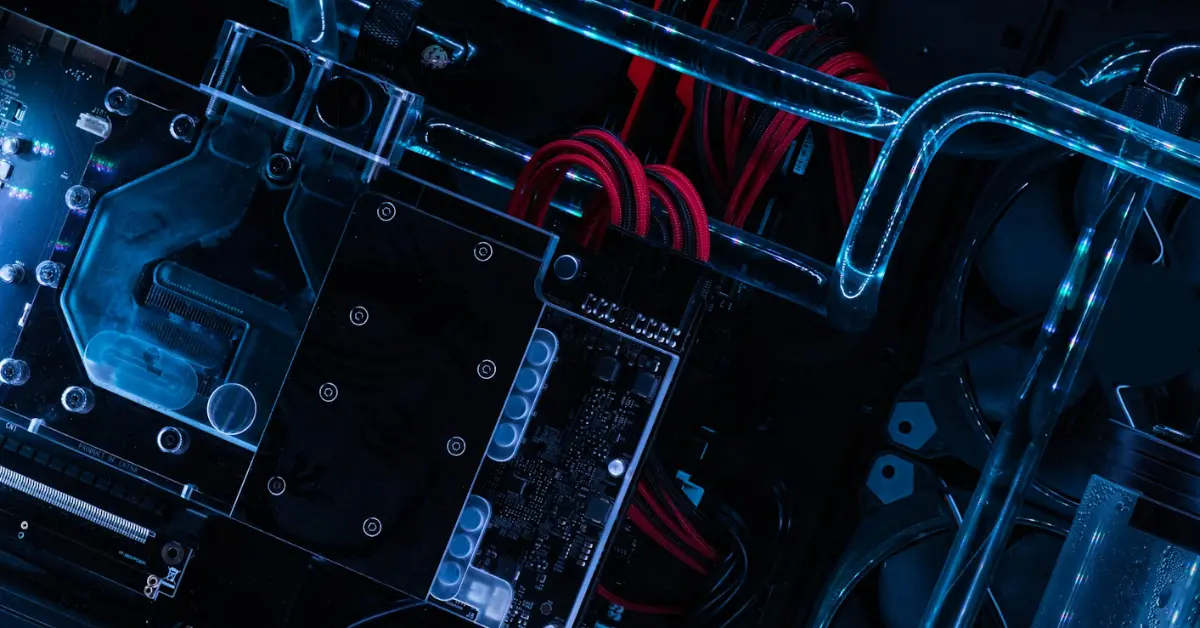

Self-driving cars rely on a complex interplay of sensors, software, and powerful computing platforms. These systems work together to perceive the environment, make decisions, and control the vehicle. Understanding the limitations of these technologies is key to understanding the risks.

- Sensors: LIDAR, radar, cameras, and ultrasonic sensors provide the vehicle with a 360-degree view of its surroundings.

- Software: Sophisticated algorithms process sensor data, identify objects, predict their behavior, and plan the vehicle’s trajectory.

- Computing Platform: Powerful processors and GPUs execute the software and control the vehicle’s actuators (steering, acceleration, braking).

Points of Failure: Where Things Can Go Wrong

The complexity of self-driving systems means there are multiple points where failures can occur. These failures can lead to accidents or unexpected behavior.

- Sensor Limitations: Sensors can be affected by weather conditions (rain, snow, fog), lighting conditions (glare, darkness), and occlusion (objects blocking the view). LIDAR, for example, can be confused by reflective surfaces.

- Software Glitches: Software errors, bugs, and vulnerabilities can lead to incorrect decisions or system failures. This is especially true for AI models that are not rigorously tested and validated.

- Communication Issues: Some self-driving cars rely on communication with other vehicles and infrastructure. Failures in these communication networks can disrupt the system’s ability to navigate safely.

- Cybersecurity Risks: Self-driving cars are vulnerable to cyberattacks. Hackers could potentially take control of the vehicle, manipulate its sensors, or steal sensitive data.

Ethical Dilemmas: The Trolley Problem on Wheels

Self-driving cars will inevitably face ethical dilemmas where they must choose between different courses of action, each with potentially harmful consequences. These “trolley problem” scenarios highlight the moral complexities of autonomous decision-making.

- The Classic Trolley Problem: Should the car prioritize the safety of its passengers or the safety of pedestrians in a situation where an accident is unavoidable?

- Programming Values: How should ethical principles be encoded into the car’s software? Should it prioritize minimizing the total number of injuries, or should it prioritize the safety of its occupants?

- Liability and Responsibility: Who is responsible in the event of an accident caused by a self-driving car? The manufacturer, the software developer, or the owner of the vehicle?

Common Mistakes and Misconceptions

Many misconceptions surround the capabilities and limitations of self-driving cars. Understanding these misconceptions is important for managing expectations and avoiding risky behavior.

- Over-Reliance on Automation: Drivers may become over-reliant on the self-driving system and fail to monitor the vehicle’s performance, leading to accidents when the system disengages.

- Assuming Full Autonomy: Many people incorrectly believe that self-driving cars are capable of handling any situation. In reality, current systems have limitations and require human intervention in certain circumstances.

- Ignoring System Warnings: Drivers may ignore warnings or alerts from the self-driving system, potentially leading to accidents.

- Driving in Unsuitable Conditions: Attempting to use self-driving features in adverse weather conditions or complex traffic situations can be dangerous.

How to Mitigate the Risks

Several strategies can be employed to mitigate the risks associated with self-driving cars.

- Rigorous Testing and Validation: Extensive testing in simulated and real-world environments is crucial for identifying and correcting software errors and sensor limitations.

- Redundancy and Fail-Safe Mechanisms: Implementing redundant systems and fail-safe mechanisms can help prevent accidents in the event of component failures.

- Cybersecurity Protections: Robust cybersecurity measures are essential for protecting self-driving cars from cyberattacks.

- Human Oversight and Training: Drivers should be properly trained on the capabilities and limitations of self-driving systems and should be ready to take control of the vehicle when necessary.

- Clear Regulations and Standards: Clear regulations and industry standards are needed to ensure the safe and responsible deployment of self-driving technology.
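Redundancy is often implemented by running several independent channels for the same measurement and voting on the result. The sketch below is a minimal illustration, assuming three redundant speed sensors; the function name `vote`, the tolerance, and the readings are hypothetical and not taken from any production system. A median voter keeps producing a sensible value when one channel fails, and it flags the channel that disagrees.

```python
from statistics import median


def vote(readings: list[float], tolerance: float) -> tuple[float, list[int]]:
    """Median voter over redundant sensor channels (illustrative sketch).

    Returns the voted value and the indices of channels that differ from it
    by more than `tolerance`, i.e. candidates to be flagged as faulty.
    """
    if len(readings) < 3:
        raise ValueError("median voting needs at least three channels")
    voted = median(readings)
    suspects = [i for i, r in enumerate(readings) if abs(r - voted) > tolerance]
    return voted, suspects


# Three hypothetical wheel-speed channels in m/s; channel 2 is stuck at zero.
value, faulty = vote([13.9, 14.1, 0.0], tolerance=0.5)
print(value, faulty)  # 13.9 [2]
```

With three channels, a median voter masks a single faulty channel. If two channels fail the same way, the vote itself is wrong, which is one reason designs also rely on diverse sensor types and self-diagnostics rather than duplication alone.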

Regulation and the Legal Landscape

The legal landscape surrounding self-driving cars is still evolving. Many jurisdictions are grappling with how to regulate these vehicles and assign liability in the event of accidents. Establishing clear legal frameworks is essential for fostering public trust and promoting innovation.

| Aspect | Current Status | Future Considerations |
|---|---|---|
| Liability | Often unclear, varies by jurisdiction | Clear guidelines needed for assigning responsibility in different accident scenarios |
| Testing Permits | Requirements vary significantly | Standardized testing protocols and permits across jurisdictions |
| Data Privacy | Concerns about data collection and usage | Regulations to protect user data and ensure transparency |
| Ethical Standards | Largely undefined | Development of ethical guidelines for programming autonomous vehicle behavior |

Frequently Asked Questions (FAQs)

Are self-driving cars truly safer than human drivers?

While the long-term goal is to achieve significantly safer roads, current self-driving technology is not yet demonstrably safer than experienced, attentive human drivers. Data is still being collected and analyzed, and the technology is constantly evolving. The potential for fewer accidents exists, but presently, it is crucial to acknowledge the inherent risks and limitations.

What happens when a self-driving car encounters an unexpected situation?

When a self-driving car encounters a situation it is not programmed to handle, it typically attempts to either disengage the autonomous system, requiring human intervention, or execute a pre-programmed safety maneuver, such as bringing the vehicle to a controlled stop. The success of this response depends on the severity and complexity of the unexpected situation.
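The two responses described above, handing control back to a human or falling back to a controlled stop, can be pictured as a small state machine. This is a minimal sketch under stated assumptions: the class `FallbackSupervisor`, its mode names, and the 10-second takeover timeout are hypothetical illustrations, not values from any manufacturer or standard.

```python
from enum import Enum, auto
from typing import Optional


class Mode(Enum):
    AUTONOMOUS = auto()
    TAKEOVER_REQUESTED = auto()
    MANUAL = auto()
    MINIMAL_RISK_MANEUVER = auto()  # e.g. a controlled stop


class FallbackSupervisor:
    """Hypothetical supervisor; the timeout is illustrative only."""

    def __init__(self, takeover_timeout_s: float = 10.0) -> None:
        self.mode = Mode.AUTONOMOUS
        self.takeover_timeout_s = takeover_timeout_s
        self._requested_at: Optional[float] = None

    def on_unhandled_situation(self, now_s: float) -> None:
        # Ask the human to take over and start the countdown.
        if self.mode is Mode.AUTONOMOUS:
            self.mode = Mode.TAKEOVER_REQUESTED
            self._requested_at = now_s

    def on_driver_input(self) -> None:
        # The driver has confirmed the takeover (e.g. steering or pedal input).
        if self.mode is Mode.TAKEOVER_REQUESTED:
            self.mode = Mode.MANUAL

    def tick(self, now_s: float) -> Mode:
        # No driver response within the timeout: fall back to a controlled stop.
        if (self.mode is Mode.TAKEOVER_REQUESTED
                and self._requested_at is not None
                and now_s - self._requested_at >= self.takeover_timeout_s):
            self.mode = Mode.MINIMAL_RISK_MANEUVER
        return self.mode


sup = FallbackSupervisor(takeover_timeout_s=10.0)
sup.on_unhandled_situation(now_s=0.0)
print(sup.tick(5.0))   # Mode.TAKEOVER_REQUESTED
print(sup.tick(10.0))  # Mode.MINIMAL_RISK_MANEUVER
```

The key design point is that the fallback does not depend on the human responding: if the takeover request goes unanswered, the system still moves to a defined safe state.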

How reliable are the sensors used in self-driving cars?

The reliability of sensors varies depending on the type of sensor and the environmental conditions. While generally robust, sensors can be affected by weather, lighting, and other factors, potentially leading to inaccurate or incomplete data. This is why sensor fusion (combining data from multiple sensors) is crucial for ensuring reliable perception.
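Sensor fusion can take many forms. One simple, well-known approach is inverse-variance weighting, in which each sensor's estimate is weighted by how precise it is. The sketch below assumes independent, unbiased range estimates with known error variances (a radar and a camera, with made-up numbers; the function name `fuse` is hypothetical) and shows how a reading lost to glare or fog simply drops out of the average. Production systems typically fuse data over time with Kalman filters or learned models; this shows only the single-step idea.

```python
from typing import Optional, Sequence, Tuple


def fuse(estimates: Sequence[Tuple[Optional[float], float]]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of independent range estimates.

    Each item is (measurement_m, variance_m2). A measurement of None means the
    sensor produced no usable reading (e.g. a camera blinded by glare).
    Returns (fused_measurement_m, fused_variance_m2).
    """
    usable = [(x, var) for x, var in estimates if x is not None]
    if not usable:
        raise ValueError("no usable sensor readings")
    weights = [1.0 / var for _, var in usable]  # more precise sensor -> larger weight
    total = sum(weights)
    fused = sum(w * x for w, (x, _) in zip(weights, usable)) / total
    return fused, 1.0 / total


# Hypothetical readings: radar 50.0 m (variance 0.25), camera 52.0 m (variance 4.0).
d, v = fuse([(50.0, 0.25), (52.0, 4.0)])
print(f"{d:.2f} m, variance {v:.3f}")  # 50.12 m, variance 0.235

# If the camera drops out, the radar estimate is used on its own.
print(fuse([(50.0, 0.25), (None, 4.0)]))  # (50.0, 0.25)
```

The fused variance is smaller than either sensor's alone, which is the quantitative sense in which combining sensors improves reliability, provided their errors really are independent.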

What is the role of artificial intelligence (AI) in self-driving cars?

AI plays a crucial role in self-driving cars, enabling them to perceive their environment, make decisions, and control the vehicle. Machine learning models are trained to recognize objects, predict how those objects will behave, and help plan the vehicle’s trajectory. Because these systems make safety-critical decisions, their reliability is paramount.

How are self-driving cars tested for safety?

Self-driving cars undergo extensive testing in simulated and real-world environments. Simulations allow for testing in a wide range of scenarios, including edge cases and hazardous situations. Real-world testing involves driving on public roads with safety drivers who can take control if necessary.

What are the cybersecurity risks associated with self-driving cars?

Self-driving cars are vulnerable to cyberattacks, including hacking, malware, and denial-of-service attacks. Hackers could potentially take control of the vehicle, manipulate its sensors, or steal sensitive data. Robust cybersecurity measures are therefore essential for protecting these vehicles.

How will self-driving cars affect employment in the transportation industry?

The widespread adoption of self-driving cars is likely to have a significant impact on employment in the transportation industry. While some jobs, such as truck drivers and taxi drivers, may be eliminated, new jobs will be created in areas such as software development, sensor maintenance, and autonomous vehicle support.

What are the privacy implications of self-driving cars?

Self-driving cars collect vast amounts of data about their surroundings and the behavior of their occupants. This data could potentially be used to track individuals, infer their personal preferences, or discriminate against them. Strong privacy regulations are needed to protect user data and ensure transparency.

Who is liable in the event of an accident involving a self-driving car?

Determining liability in the event of an accident involving a self-driving car is a complex legal issue. Depending on the circumstances, liability could fall on the manufacturer of the vehicle, the software developer, the owner of the vehicle, or even the passenger. The legal frameworks for assigning liability are still evolving.

How will self-driving cars interact with human drivers on the road?

The interaction between self-driving cars and human drivers will be a critical factor in the safety and efficiency of future transportation systems. Self-driving cars will need to be able to anticipate the behavior of human drivers and react appropriately. Clear communication protocols and traffic rules will be essential.

What are the potential benefits of self-driving cars?

Despite the risks, self-driving cars offer numerous potential benefits, including reduced traffic accidents, increased mobility for the elderly and disabled, improved fuel efficiency, and reduced traffic congestion. These potential benefits are driving the ongoing development and deployment of autonomous driving technology.

How is society preparing for the widespread adoption of self-driving cars?

Society is preparing for the widespread adoption of self-driving cars through research and development, regulatory efforts, infrastructure investments, and public education campaigns. These efforts are aimed at ensuring the safe, responsible, and equitable deployment of this transformative technology.

By understanding how self-driving cars can be dangerous, and by actively working to mitigate these risks, society can pave the way for a future where autonomous vehicles enhance safety, efficiency, and accessibility on our roads.