Can the Creators of C AI See Your Messages?

Data privacy is a central concern in the age of AI. Practices differ between providers, but the creators of consumer-facing AI models can generally access your messages, although that access is typically limited, audited, and subject to ethical and legal guidelines.

The Rise of Conversational AI and Data Privacy

Conversational AI (C AI) has become ubiquitous, powering chatbots, virtual assistants, and personalized experiences across countless platforms. This proliferation raises critical questions about data privacy. With every interaction, we’re essentially sharing information with AI systems, prompting concerns about who has access to this data and how it’s being used. Understanding the potential for access and the safeguards in place is crucial for informed participation in the AI ecosystem.

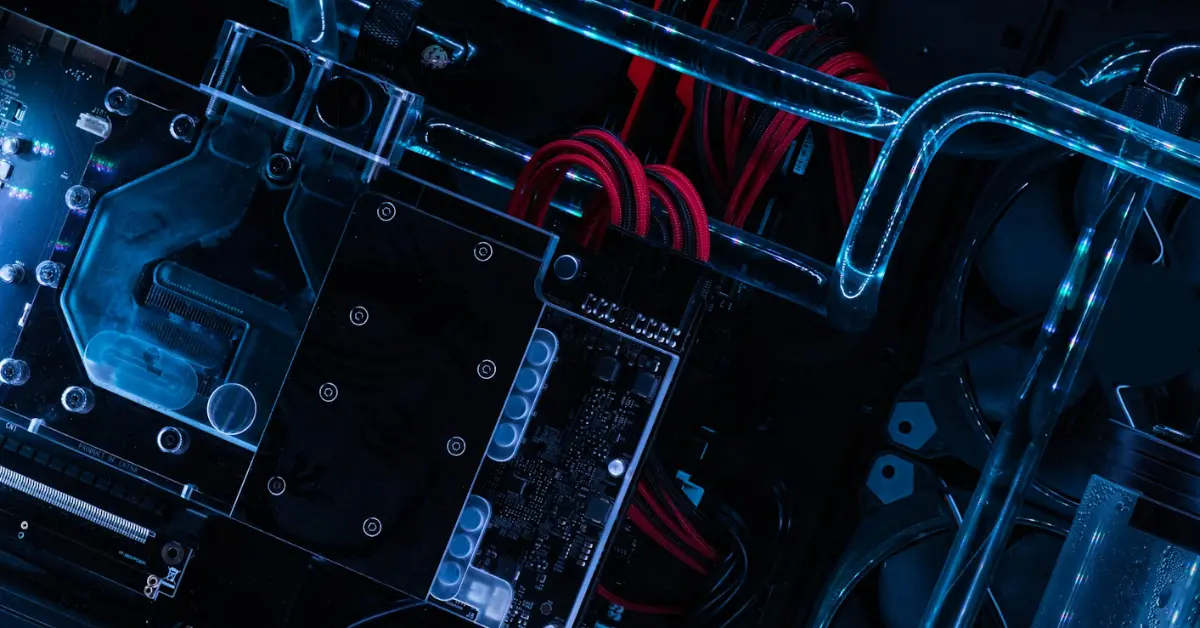

The Architecture of C AI and Data Handling

To understand whether the creators of C AI can see your messages, we need to examine the typical architecture of these systems. Broadly, they consist of:

- Input Layer: This is where user messages are received, be it through text, voice, or other modalities.

- Processing Engine: This involves natural language processing (NLP) algorithms, machine learning models, and databases that interpret and respond to user queries.

- Output Layer: This generates the AI’s response, displaying it to the user.

- Data Storage: A crucial element. User interactions, along with metadata, are often stored for model training, improvement, and debugging.

It is within this data storage layer that the most significant privacy concerns reside.
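As a rough illustration of the layers listed above, here is a minimal Python sketch. All names (`ChatPipeline`, `StoredInteraction`, `handle`) are hypothetical and not taken from any real product. The point is only that the storage step keeps a copy of each message, which is what makes later access by the provider possible.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class StoredInteraction:
    """One record in the hypothetical data storage layer."""
    user_id: str
    message: str
    response: str
    timestamp: datetime


@dataclass
class ChatPipeline:
    """Toy pipeline: input -> processing -> output, with every turn stored."""
    store: list = field(default_factory=list)

    def process(self, message: str) -> str:
        # Stand-in for the NLP / model step; a real engine is far more complex.
        return f"You said: {message.strip()}"

    def handle(self, user_id: str, message: str) -> str:
        # Input layer: the raw message arrives here.
        response = self.process(message)  # processing engine
        # Data storage: the message, response, and metadata are retained.
        self.store.append(
            StoredInteraction(user_id, message, response,
                              datetime.now(timezone.utc))
        )
        return response  # output layer


pipeline = ChatPipeline()
reply = pipeline.handle("user-123", "What is my account balance?")
```

After the call, `pipeline.store` holds the full text of the user's message alongside the user ID and a timestamp. Anyone with read access to that store can read the conversation, which is why the safeguards around storage matter so much.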

Why User Data is Collected and Used

While the idea of your conversations being scrutinized might be unsettling, there are legitimate reasons why user data is collected and used by the creators of C AI:

- Model Training: AI models learn from data. Analyzing user interactions allows developers to refine algorithms, improve accuracy, and enhance the overall user experience. More data generally leads to better AI.

- Bug Fixing and Troubleshooting: Analyzing conversations helps identify errors in the AI’s responses, pinpoint biases, and address technical issues.

- Product Improvement: Understanding how users interact with the AI helps identify areas for improvement and new features to develop.

- Abuse Detection: AI systems can be used for malicious purposes. Monitoring conversations helps identify and prevent abuse.

The Security Measures in Place

While access is possible, reputable AI companies implement numerous security measures to protect user data:

- Encryption: Data is typically encrypted both in transit and at rest, making it difficult for unauthorized parties to access.

- Access Controls: Strict access controls limit who within the company can access user data.

- Anonymization and Pseudonymization: Data may be anonymized or pseudonymized, removing or replacing personally identifiable information (PII) with codes or identifiers.

- Data Retention Policies: Companies establish policies outlining how long user data is stored and when it is deleted.

- Regular Audits: Internal and external audits help ensure compliance with data privacy regulations and security protocols.
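Two of the measures above, pseudonymization and retention policies, are simple enough to sketch. The following is a minimal example under stated assumptions: the key, the 30-day window, and the function names are all hypothetical, and real systems typically combine these steps with key management and deletion jobs that are not shown here.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone

# Hypothetical values for illustration only. A real key would live in a
# secrets manager, and the retention window is set by company policy.
PSEUDONYM_KEY = b"example-secret-key"
RETENTION = timedelta(days=30)


def pseudonymize(user_id: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace a user identifier with a keyed hash (HMAC-SHA256).

    The same input always maps to the same token, so records can still be
    linked, but the token cannot be reversed without the key.
    """
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def is_expired(stored_at: datetime, now: datetime) -> bool:
    """Return True when a record is older than the retention window."""
    return now - stored_at > RETENTION


token = pseudonymize("alice@example.com")
```

Note that pseudonymized data is still linkable to a person by whoever holds the key, which is why it is treated as weaker protection than full anonymization.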

The Role of Data Privacy Regulations

Stringent data privacy regulations like GDPR (General Data Protection Regulation) and CCPA (California Consumer Privacy Act) play a crucial role in protecting user data. These regulations mandate:

- Transparency: Companies must be transparent about how they collect, use, and share user data.

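- User Consent: Depending on the regulation and the purpose, companies must either obtain consent before processing data (consent is one of several lawful bases under GDPR) or give users a way to opt out (as CCPA does for the sale of personal information).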

- Data Minimization: Companies should only collect the data that is necessary for the stated purpose.

- Right to Access, Rectification, and Erasure: Users have the right to access their data, correct inaccuracies, and request its deletion.

The Risks and Mitigation Strategies

Despite these safeguards, risks remain:

- Data Breaches: A data breach could expose user conversations to unauthorized individuals.

- Misuse of Data: While unlikely for reputable companies, there’s a potential for data to be used for purposes beyond those disclosed in the privacy policy.

- Bias Amplification: If the data used to train the AI reflects existing biases, the AI may perpetuate and amplify those biases.

To mitigate these risks:

- Choose Reputable AI Providers: Opt for companies with a strong track record of data privacy and security.

- Review Privacy Policies Carefully: Understand how your data is being collected, used, and shared.

- Adjust Privacy Settings: Many AI platforms offer privacy settings that allow you to control the level of data collection.

- Be Mindful of What You Share: Avoid sharing sensitive personal information with AI systems.
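One practical way to follow the last tip is to strip obvious identifiers from a message before it is sent. The sketch below assumes a hypothetical `redact` helper and two deliberately simple regular expressions. They catch common email and phone formats only and will miss many other kinds of personal information, so treat this as an illustration rather than a complete filter.

```python
import re

# Simplified patterns for illustration; real-world PII detection is harder.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


safe = redact("Reach me at jane.doe@example.com or +1 555-123-4567.")
# safe == "Reach me at [EMAIL] or [PHONE]."
```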

Comparison of Data Access by Different AI Providers

| Provider | Data Access Policy | Data Security Measures | Compliance |
|---|---|---|---|
| Major AI Company A | Limited access, audited logs, role-based access control | Encryption, anonymization, penetration testing | GDPR, CCPA |
| Major AI Company B | Data access for model training, anonymized datasets | Encryption, firewalls, intrusion detection systems | GDPR, CCPA, HIPAA (where applicable) |
| Open-Source AI Project | Data access may vary depending on deployment | User-defined security measures | Compliance responsibility lies with the user |
| Small AI Startup | More variable, check individual privacy policy | May have less robust security measures | Varies, potential for non-compliance |

Frequently Asked Questions (FAQs)

What types of messages are most likely to be accessed by creators of C AI?

While the details are often proprietary, conversations that are flagged for potential abuse, that involve technical errors, or that are sampled to improve model performance are more likely to be reviewed. Regular day-to-day chats are typically not actively monitored unless specific keywords or detection algorithms flag them.

Are my conversations with C AI considered confidential?

Generally, conversations are not considered strictly confidential in the same way communications with a doctor or lawyer are. While companies strive to protect user data, the sheer volume of interactions and the need for model training mean absolute confidentiality cannot be guaranteed.

How can I find out what data an AI company has collected about me?

Many data privacy regulations grant users the right to access their data. Check the AI company’s privacy policy for instructions on how to request a data access report. The process usually involves submitting a formal request through their website or customer support.

Can I opt out of having my conversations used for model training?

Some AI platforms let you opt out of having your conversations used for model training. However, opting out might limit the AI’s ability to personalize its responses. Review the platform’s privacy settings to see if this option is available.

What happens to my data when I delete my account with an AI service?

Data deletion policies vary. Some companies immediately delete all user data upon account deletion, while others may retain anonymized data for a limited period for analytical purposes. Consult the company’s privacy policy for specific details.

How do I know if an AI company is compliant with GDPR or CCPA?

Look for statements of compliance with GDPR or CCPA on the company’s website or in its privacy policy. These statements typically outline the steps the company has taken to comply with these regulations. Compliance certifications can also be a good indicator.

What should I do if I suspect an AI company has misused my data?

If you suspect an AI company has misused your data, contact the company’s data protection officer (DPO) or privacy team. You can also file a complaint with the relevant data protection authority in your jurisdiction.

Are there any AI platforms that prioritize privacy more than others?

Yes, some AI platforms prioritize privacy by design. These platforms often use federated learning, differential privacy, and other privacy-enhancing technologies to minimize data collection and protect user privacy. Research platforms that specifically advertise privacy-focused features.
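To give a sense of what differential privacy means in practice, here is a minimal sketch of the Laplace mechanism applied to a simple count, for example "how many users mentioned topic X". The function names and the choice of epsilon are assumptions made for illustration, and production systems use vetted libraries rather than hand-rolled noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise.

    The difference of two independent exponential draws with mean `scale`
    follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count.

    Adding or removing one user changes a count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon. Smaller epsilon means more noise and
    stronger privacy.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)  # fixed seed only so the example is repeatable
noisy = private_count(1000, epsilon=1.0, rng=rng)
```

The published number stays close to the true count on average, but the added noise makes it hard to infer from it whether any single user's conversation was included.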

Is voice data more vulnerable than text data?

Voice data can be more vulnerable due to its biometric nature. Voiceprints can be used to identify individuals, even if the content of the conversation is anonymized. Therefore, ensure that the AI platform you are using has robust security measures to protect voice data.

What are the potential risks of using open-source C AI models?

While open-source models offer transparency, they also come with risks. Security depends heavily on how the user deploys and configures the system. The creators of C AI (in this case, the community of developers) generally do not have direct access to your data unless you contribute it to the community for training or research, but a carelessly configured deployment is more likely to contain vulnerabilities. Due diligence is critical.

Can companies sell my conversations with C AI to third parties?

Most reputable companies state in their privacy policies that they do not sell user data to third parties for marketing or advertising purposes. However, they may share anonymized or aggregated data with partners for research or analytical purposes. Check the company’s privacy policy for details.

What is the future of data privacy in the age of C AI?

The future of data privacy in the age of C AI will likely involve stricter regulations, more privacy-enhancing technologies, and increased user awareness. Developing AI that respects user privacy will be essential for building trust and fostering the responsible adoption of AI. The answer to whether the creators of C AI can see your messages will keep evolving as technology and regulations advance.